Plagiarism in the Age of Generative AI

What Needs to Change?

Plagiarism has always been a moving target. From handwritten essays copied out of textbooks to Ctrl+C and Ctrl+V from Wikipedia, every new medium has brought a new way to cut corners. Now we have entered an entirely new era, one in which students can generate a complete essay in seconds using tools like ChatGPT, Claude, or Gemini. The rules of the game have changed, and our approach to academic integrity needs to change with them.

The New Shape of Plagiarism

In traditional plagiarism, someone copied someone else’s work, often verbatim. That was relatively easy to spot and easy to prove. With generative AI, however, a student can submit text that is entirely new yet was not written by them. It isn’t copied from any source; it’s generated by a machine. Technically, there is no source to cite. So is it still plagiarism?

This is where things get tricky. AI output isn’t plagiarism in the classic sense, but it can be dishonest if it's passed off as a student's own thinking or effort. It shifts the problem from one of content theft to one of intellectual outsourcing. The issue is no longer "who wrote this?" but "how was this created?"

The Problem with Current Detection

Some institutions have turned to AI detectors to catch AI-generated content, but this is a losing battle. AI detectors are notoriously unreliable: they often flag polished, fluent human writing as "suspicious" while missing cleverly prompted AI output entirely. Worse, they can penalize students who write well or who use grammar tools to improve their English.

We're trying to fight AI with more AI—using opaque probability scores to police an already blurred boundary. This approach is not only flawed but also risks harming innocent students and creating a culture of fear.

What Needs to Change

If we want to maintain academic integrity in the age of generative AI, we need to rethink more than just our detection tools. We need to rethink our entire system of assessment and trust.

Here’s what needs to change:

1. Assessment Design

We must stop relying on generic, open-ended essays that are easy for AI to generate. Instead, we should:

Design prompts that require personal reflection, experience, or local context.

Break assignments into multiple steps with drafts, outlines, and revisions.

Use oral presentations, peer reviews, or live Q&As to verify understanding.

2. Clearer Guidelines

Schools and universities must define what is and isn’t acceptable AI use. Is it OK to brainstorm with AI? To use it to fix grammar? To generate ideas? To write a full draft? Without clear policies, students are left guessing.

3. AI as a Learning Tool, Not a Shortcut

Instead of banning AI tools, educators should teach students how to use them well. The goal isn't to stop students from using AI, but to ensure they still learn critical thinking, research, and writing skills along the way.

4. Authenticity Over Originality

We should care more about whether a student understands the content than whether every word is original. Authentic engagement—explaining, critiquing, connecting ideas—is much harder to fake than filling a page.

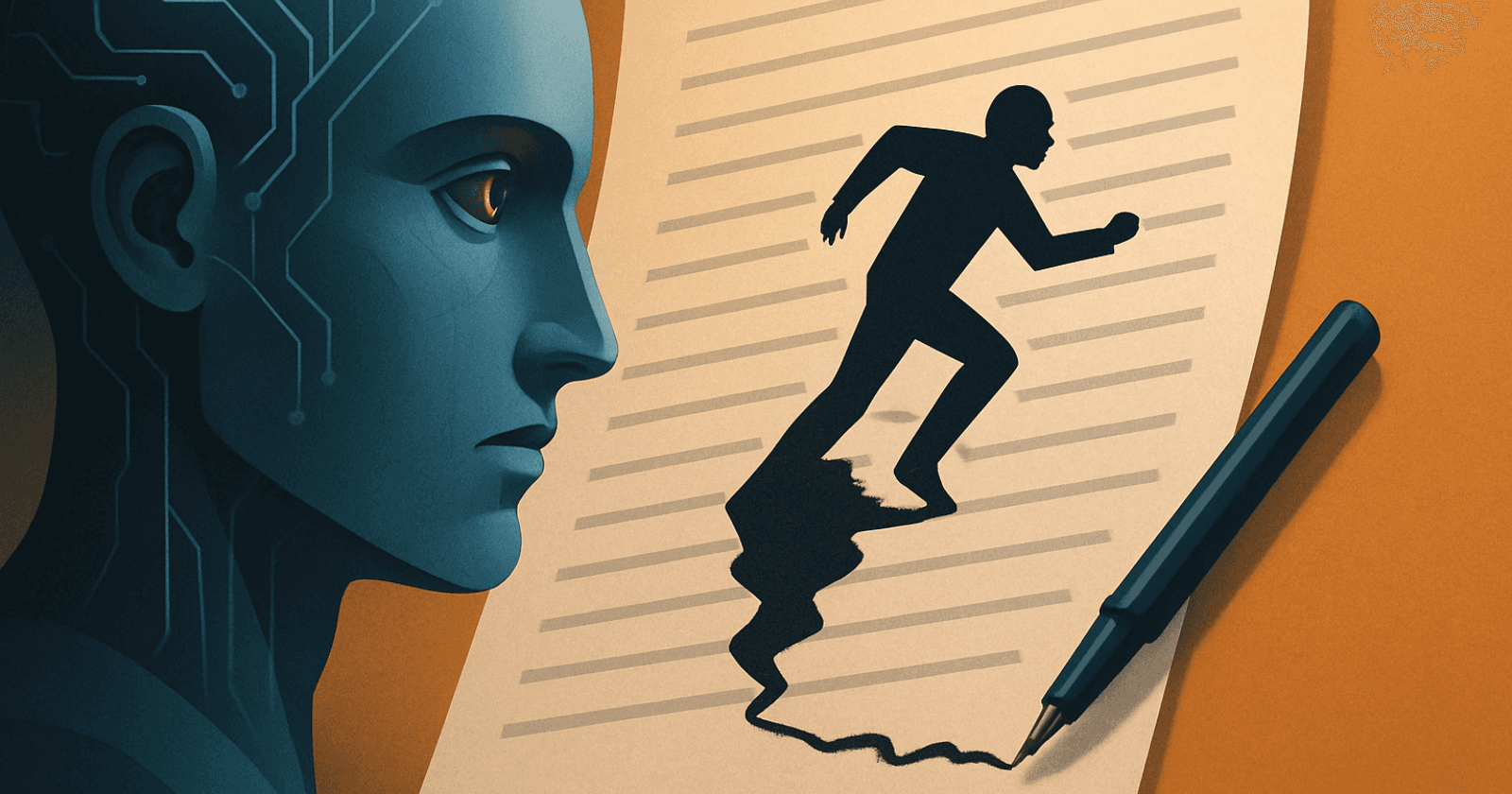

A New Kind of Integrity

Generative AI isn’t going away. Students will use it in school, and they’ll use it even more in their careers. Our job isn’t to block access, but to build a culture where tools are used transparently and ethically.

Academic integrity in this new era isn’t about hiding AI use—it’s about being honest, responsible, and engaged in the learning process. Plagiarism may not look the same anymore, but the core principle remains: do your own thinking.